This post is a condensed version of the original post by Andreas Jordan. Prerequisites were taken from sqlservercentral. They have compiled a nice and extensive list of them.

With SQL Server 2012, Microsoft introduced the AlwaysOn Availability Group feature, and since then many changes and improvements have been made. This article will cover the prerequisites and steps to install AlwaysOn in a SQL 2019 environment.

Prerequisites

Windows

- Do not install AlwaysOn on a domain controller

- The operating system must be Windows 2012 or later

- Install all available Windows hotfixes on every server including replicas

- Windows Server Failure Cluster must be installed on every server (I will include powershell code to install it using dbatools module)

SQL Server

- Each server must be a node in the WFSC

- No replica can run Active Directory services

- Each replica must run on comparable hardware that can handle identical workloads

- Each instance must run the same version of SQL server, and have the same SQL server collation

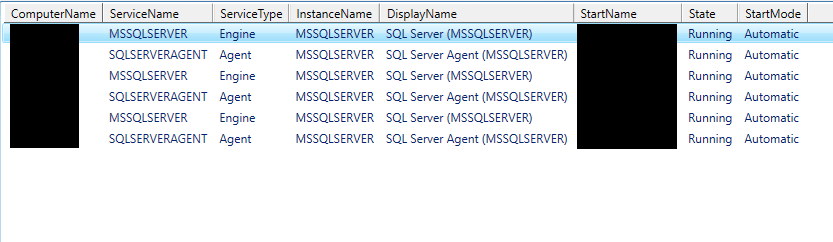

- The account that runs SQL Services should be a domain account

Network

- It is recommended to use the same network links for communication between WFSC nodes and AlwaysOn replicas

Databases in the AG

- user databases (you cannot replicate system databases)

- read/write

- multi-user

- AUTO_CLOSE disabled

- databases should be to set full recovery mode

- not configured for database mirroring

Step 1: Add Windows Failover Cluster to each server

Define the variables

#Define the variables for SQLCluster

$DomainName = 'DOMAIN'

$DomainController = 'DC1.com'

$Networkshare = 'FS01.com'

$ClusterNodes = 'SQLSERVER01.com', 'SQLSERVER02.com','SQLSERVER03.com'

$ClusterName = 'SQLSERVERCL01'

$ClusterIP = '10.0.0.28'

Below code will install the failover cluster feature on all the nodes (servers/replicas whatever you want to call them)

#Install Failover cluster on all the nodes

Invoke-Command -ComputerName $ClusterNodes -ScriptBlock { Install-WindowsFeature -Name Failover-Clustering -IncludeManagementTools } | Format-Table

Move the servers to a fixed OU in Active directory (I have had to create an OU named SQL Always ON for this purpose)

Move-ADObject -Identity 'CN=SQLSERVER01,CN=Computers,DC=com,DC=local' -TargetPath 'OU=SQL Always ON,OU=Servers,DC=com,DC=local'

Move-ADObject -Identity 'CN=SQLSERVER02,CN=Computers,DC=com,DC=local' -TargetPath 'OU=SQL Always ON,OU=Servers,DC=com,DC=local'

Move-ADObject -Identity 'CN=SQLSERVER03,CN=Computers,DC=com,DC=local' -TargetPath 'OU=SQL Always ON,OU=Servers,DC=com,DC=local'

Next up is create the windows cluster, which is the basis for SQL AlwaysOn

#We do not have shared storage for the quorum in the environment

#Therefore, we will create the network share on the domain controller and authorize the computer account of the failover cluster we just created

Invoke-Command -ComputerName $DomainController -ScriptBlock {

New-Item -Path "C:\WindowsClusterQuorum_$using:ClusterName" -ItemType Directory | Out-Null

New-SmbShare -Path "C:\WindowsClusterQuorum_$using:ClusterName" -Name "WindowsClusterQuorum_$using:ClusterName" | Out-Null

Grant-SmbShareAccess -Name "WindowsClusterQuorum_$using:ClusterName" -AccountName "$using:DomainName\$using:ClusterName$" -AccessRight Full -Force | Out-Null

}

$Cluster | Set-ClusterQuorum -NodeAndFileShareMajority "\\$DomainController\WindowsClusterQuorum_$ClusterName" | Format-List

If you have reached this point, then you have completed the windows server level prerequisites.

Next step is to create the availability group. Before, we do that, we need to enable the AlwaysOn on SQL instances using the below code. Don’t you love not having to RDP into a machine and do all this manually!

#Define the variable for AlwaysOn group

$SQLServerServiceAccount = 'svc-sql-serviceaccount'

$Password = 'SecurePassword'

$BackupPath = '\FS01.com.local\SQLBackup\Temp'

$DatabaseName = 'DB01'

$AvailabilityGroupName = 'SQLAG01'

$AvailabilityGroupIP = '10.0.0.19'

#Enable Always On on SQL instances, typically done via SQL Server Configuration Manager

Enable-DbaAgHadr -SqlInstance $SqlInstances -Force | Format-Table

Next, setup the endpoints

#Setup of the endpoints

New-DbaEndpoint -SqlInstance $SqlInstances -Name hadr_endpoint -Port 5022 | Start-DbaEndpoint | Format-Table

New-DbaLogin -SqlInstance $SqlInstances -Login "$DomainName\$SQLServerServiceAccount" | Format-Table

Invoke-DbaQuery -SqlInstance $SqlInstances -Query "GRANT CONNECT ON ENDPOINT::hadr_endpoint TO [$DomainName\$SQLServerServiceAccount]"

Finally, we need to backup the database on primary and restore them onto each replica with norecovery in order to be able to join them to an availability group.

#Transfer databases to replica

$Database = Get-DbaDatabase -SqlInstance $SqlInstances[0] -Database $DatabaseName

$Database | Backup-DbaDatabase -Path $BackupPath -Type Database | Restore-DbaDatabase -SqlInstance $SqlInstances[1] -NoRecovery | Out-Null

$Database | Backup-DbaDatabase -Path $BackupPath -Type Log | Restore-DbaDatabase -SqlInstance $SqlInstances[1] -Continue -NoRecovery | Out-Null

Finally, create the availability group and resume the data movement

#Create the availability group

$AvailabilityGroup = New-DbaAvailabilityGroup `

-Name $AvailabilityGroupName `

-Database $DatabaseName `

-ClusterType Wsfc `

-Primary $SqlInstances[0] `

-Secondary $SqlInstances[1] `

-SeedingMode Automatic `

-IPAddress $AvailabilityGroupIP `

-Confirm:$false

$AvailabilityGroup | Format-List

Get-DbaAgReplica -SqlInstance $SqlInstances[0] -AvailabilityGroup $AvailabilityGroupName | Format-Table

Get-DbaAgDatabase -SqlInstance $SqlInstances -AvailabilityGroup $AvailabilityGroupName -Database $DatabaseName | Format-Table

Here is the complete code in one piece for your ease of copying it for implementation on your own environment. You will have to replicate the last piece of code in order to other databases. I have yet to experiment if I can pass multiple databases as parameters to backup-dbadatabase and join them to the AG.

#Define the variables for Windows SQLCluster

$DomainName = 'DOMAIN'

$DomainController = 'DC1.com'

$Networkshare = 'FS01.com'

$ClusterNodes = 'SQLSERVER01.com', 'SQLSERVER02.com','SQLSERVER03.com'

$ClusterName = 'SQLSERVERCL01'

$ClusterIP = '10.0.0.28'

#Install Failover cluster on all the nodes

Invoke-Command -ComputerName $ClusterNodes -ScriptBlock { Install-WindowsFeature -Name Failover-Clustering -IncludeManagementTools } | Format-Table

#Move the servers to a fixed OU in Active directory

Move-ADObject -Identity 'CN=SQLSERVER01,CN=Computers,DC=com,DC=local' -TargetPath 'OU=SQL Always ON,OU=Servers,DC=com,DC=local'

Move-ADObject -Identity 'CN=SQLSERVER02,CN=Computers,DC=com,DC=local' -TargetPath 'OU=SQL Always ON,OU=Servers,DC=com,DC=local'

Move-ADObject -Identity 'CN=SQLSERVER03,CN=Computers,DC=com,DC=local' -TargetPath 'OU=SQL Always ON,OU=Servers,DC=com,DC=local'

#We do not have shared storage for the quorum in the environment

#Therefore, we will create the network share on the domain controller and authorize the computer account of the failover cluster we just created

Invoke-Command -ComputerName $DomainController -ScriptBlock {

New-Item -Path "C:\WindowsClusterQuorum_$using:ClusterName" -ItemType Directory | Out-Null

New-SmbShare -Path "C:\WindowsClusterQuorum_$using:ClusterName" -Name "WindowsClusterQuorum_$using:ClusterName" | Out-Null

Grant-SmbShareAccess -Name "WindowsClusterQuorum_$using:ClusterName" -AccountName "$using:DomainName\$using:ClusterName$" -AccessRight Full -Force | Out-Null

}

$Cluster | Set-ClusterQuorum -NodeAndFileShareMajority "\\$DomainController\WindowsClusterQuorum_$ClusterName" | Format-List

#Define the variable for AlwaysOn group

$SQLServerServiceAccount = 'svc-sql-serviceaccount'

$Password = 'SecurePassword'

$BackupPath = '\FS01.com.local\Temp'

$DatabaseName = 'DB01'

$AvailabilityGroupName = 'SQLAG01'

$AvailabilityGroupIP = '10.0.0.19'

#Enable Always On on SQL instances, typically done via SQL Server Configuration Manager

Enable-DbaAgHadr -SqlInstance $SqlInstances -Force | Format-Table

#Setup of the endpoints

New-DbaEndpoint -SqlInstance $SqlInstances -Name hadr_endpoint -Port 5022 | Start-DbaEndpoint | Format-Table

New-DbaLogin -SqlInstance $SqlInstances -Login "$DomainName\$SQLServerServiceAccount" | Format-Table

Invoke-DbaQuery -SqlInstance $SqlInstances -Query "GRANT CONNECT ON ENDPOINT::hadr_endpoint TO [$DomainName\$SQLServerServiceAccount]"

#Transfer databases to replica

$Database = Get-DbaDatabase -SqlInstance $SqlInstances[0] -Database $DatabaseName

$Database | Backup-DbaDatabase -Path $BackupPath -Type Database | Restore-DbaDatabase -SqlInstance $SqlInstances[1] -NoRecovery | Out-Null

$Database | Backup-DbaDatabase -Path $BackupPath -Type Log | Restore-DbaDatabase -SqlInstance $SqlInstances[1] -Continue -NoRecovery | Out-Null

#Create the availability group

$AvailabilityGroup = New-DbaAvailabilityGroup `

-Name $AvailabilityGroupName `

-Database $DatabaseName `

-ClusterType Wsfc `

-Primary $SqlInstances[0] `

-Secondary $SqlInstances[1] `

-SeedingMode Automatic `

-IPAddress $AvailabilityGroupIP `

-Confirm:$false

$AvailabilityGroup | Format-List

Get-DbaAgReplica -SqlInstance $SqlInstances[0] -AvailabilityGroup $AvailabilityGroupName | Format-Table

Get-DbaAgDatabase -SqlInstance $SqlInstances -AvailabilityGroup $AvailabilityGroupName -Database $DatabaseName | Format-Table