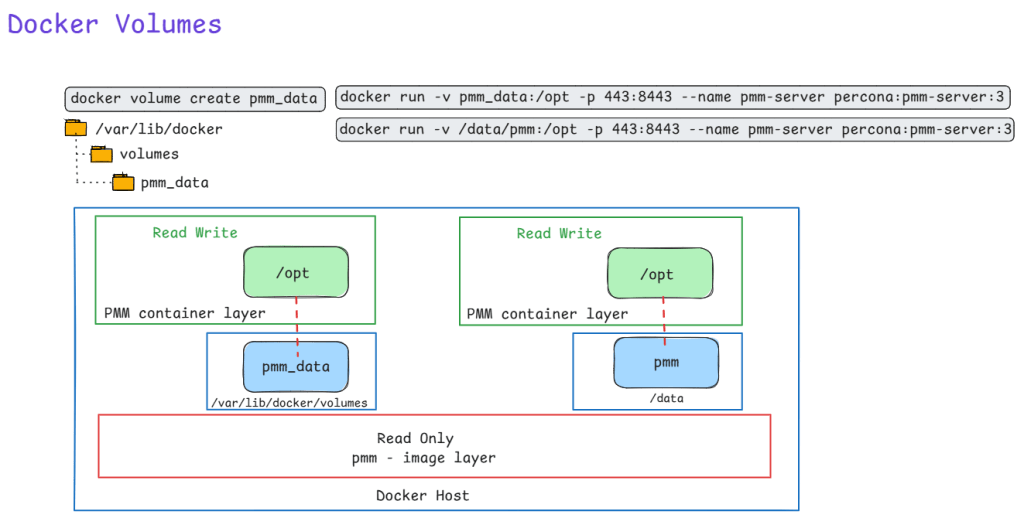

The illustration above shows PMM running with a Docker volume, contrasted with a bind mount. The recommended method is a Docker volume, attached with the -v flag, so that data persists on the host machine.
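
To make the contrast concrete, here is a minimal sketch that builds both variants of the `docker run` command. The image tag `percona/pmm-server:2`, the `/srv` data directory, the volume name `pmm-data`, and the host path `/opt/pmm-data` are assumptions chosen for illustration; adjust them to your PMM version and host layout.

```python
def pmm_run_command(source, target="/srv"):
    """Build a `docker run` argv for PMM Server with the given -v source.

    Image tag, container name, port, and target path are illustrative assumptions.
    """
    return [
        "docker", "run", "-d",
        "--name", "pmm-server",
        "-p", "443:443",
        "-v", f"{source}:{target}",
        "percona/pmm-server:2",
    ]


def mount_kind(source):
    # Docker treats an absolute host path as a bind mount
    # and a bare name as a named volume that Docker manages.
    return "bind mount" if source.startswith("/") else "named volume"


volume_cmd = pmm_run_command("pmm-data")      # named volume (recommended)
bind_cmd = pmm_run_command("/opt/pmm-data")   # bind mount (hypothetical host path)

for source, cmd in (("pmm-data", volume_cmd), ("/opt/pmm-data", bind_cmd)):
    print(f"{mount_kind(source)}: {' '.join(cmd)}")
```

The only difference between the two commands is the left-hand side of the `-v` argument: a name lets Docker manage the storage location, while a path ties the data to a specific host directory.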


Ever been asked to pull a report of specific Azure VMs, their OS versions, who owns them, and exactly how much storage they are chewing up? Clicking through the Azure Portal to piece this together is a massive time sink.

This is where Azure Resource Graph (ARG) shines. I use the KQL query below whenever I need to quickly audit environments for cost allocation, cleanup projects, or basic inventory tracking.

Why this query is useful:

- It adds the OS disk and every data disk into a single TotalCombinedStorageGB metric. It also safely handles edge cases, like VMs with zero data disks, without dropping them from the report.
- It pulls out the Owner tag and the OSVersion, allowing you to easily filter for specific teams or operating systems using substring matches and lists.

How to use it:

- Update the YOUR FILTERS section with your target OS and Owner names.

Resources

| where type =~ 'microsoft.compute/virtualmachines'

| extend VMName = tostring(name)

| extend Region = tostring(location)

| extend ResourceGroup = tostring(resourceGroup)

| extend OSType = tostring(properties.storageProfile.osDisk.osType)

| extend OSVersion = strcat(tostring(properties.storageProfile.imageReference.offer), " ", tostring(properties.storageProfile.imageReference.sku))

| extend Owner = tostring(tags['Owner'])

// --- YOUR FILTERS ---

| where OSVersion contains "<ENTER_OS_VERSION_HERE>"

// The in~ operator checks against a list of values

| where Owner in~ ("<OWNER_1>", "<OWNER_2>", "<OWNER_3>")

// --------------------

| extend OSDiskSizeGB = toint(properties.storageProfile.osDisk.diskSizeGB)

| extend OSDiskSizeGB = iff(isnull(OSDiskSizeGB), 0, OSDiskSizeGB)

| extend DataDisks = properties.storageProfile.dataDisks

| extend DataDisks = iff(isnull(DataDisks) or array_length(DataDisks) == 0, dynamic([{"diskSizeGB": 0}]), DataDisks)

| mv-expand DataDisks

| extend DataDiskSizeGB = toint(DataDisks.diskSizeGB)

| extend DataDiskSizeGB = iff(isnull(DataDiskSizeGB), 0, DataDiskSizeGB)

| summarize TotalDataDiskSizeGB = sum(DataDiskSizeGB) by id, VMName, ResourceGroup, subscriptionId, Region, OSType, OSVersion, Owner, OSDiskSizeGB

| extend TotalCombinedStorageGB = OSDiskSizeGB + TotalDataDiskSizeGB

| join kind=leftouter (

ResourceContainers

| where type =~ 'microsoft.resources/subscriptions'

| project subscriptionId, SubscriptionName = tostring(name)

) on subscriptionId

| project

VMName,

ResourceGroup,

SubscriptionName,

Region,

OSType,

OSVersion,

Owner,

OSDiskSizeGB,

TotalDataDiskSizeGB,

TotalCombinedStorageGB

| order by VMName asc
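
The storage arithmetic in the query is easier to check outside KQL. The sketch below mirrors it in Python under stated assumptions: the sample VM records are hypothetical, shaped like the `properties.storageProfile` object the query reads, and a missing size is treated as 0 just as the `iff(isnull(...), 0, ...)` steps do.

```python
def combined_storage_gb(vm):
    """Return the per-VM storage totals the query projects."""
    profile = vm.get("properties", {}).get("storageProfile", {})
    # A missing OS disk size counts as 0, like the isnull() guard in the query.
    os_disk_gb = profile.get("osDisk", {}).get("diskSizeGB") or 0
    # A VM with no data disks still produces a row with a total of 0.
    data_disks = profile.get("dataDisks") or []
    data_gb = sum((disk.get("diskSizeGB") or 0) for disk in data_disks)
    return {
        "VMName": vm.get("name"),
        "OSDiskSizeGB": os_disk_gb,
        "TotalDataDiskSizeGB": data_gb,
        "TotalCombinedStorageGB": os_disk_gb + data_gb,
    }


# Hypothetical sample records for illustration only.
sample_vms = [
    {"name": "vm-a", "properties": {"storageProfile": {
        "osDisk": {"diskSizeGB": 128},
        "dataDisks": [{"diskSizeGB": 256}, {"diskSizeGB": 512}]}}},
    {"name": "vm-b", "properties": {"storageProfile": {
        "osDisk": {"diskSizeGB": 64},
        "dataDisks": []}}},
    {"name": "vm-c", "properties": {"storageProfile": {
        "osDisk": {},
        "dataDisks": [{"diskSizeGB": 100}]}}},
]

report = [combined_storage_gb(vm) for vm in sample_vms]
for row in report:
    print(row)
```

Note that `vm-b`, which has no data disks, stays in the report with a data-disk total of 0. That is the behavior the zero-size placeholder before `mv-expand` is there to guarantee in the KQL version.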

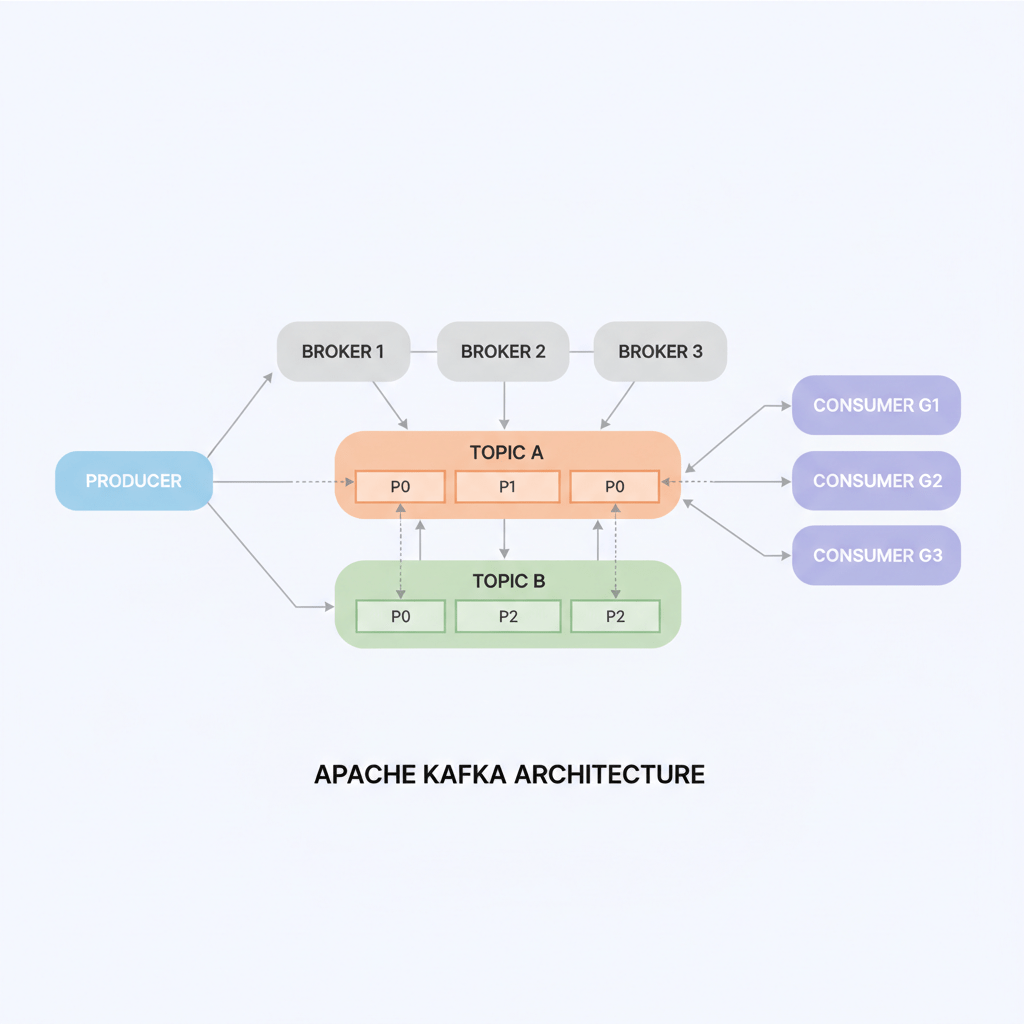

Apache Kafka is a powerhouse for real-time data streaming, acting like a superhighway for data that never sleeps. For database engineers dipping their toes into streaming, it’s your bridge from batch processing to instant insights.

Picture Kafka as a distributed post office for massive data volumes. Producers (apps sending data) drop messages into topics—logical channels like mailboxes. These topics split into partitions across brokers (Kafka servers) for scalability and parallelism. Consumers subscribe to topics, pulling messages at their pace, with replication ensuring no data loss even if servers fail.

(See the diagram above.)

This setup delivers high throughput (a well-provisioned cluster can handle millions of messages per second), low latency, and fault tolerance, which makes it a natural step from SQL queries to event streams.
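
The producer, topic, partition, and consumer model described above can be sketched in a few lines of plain Python. This is a toy in-memory model, not the Kafka client API: the `Topic` and `ConsumerGroup` classes are hypothetical, `zlib.crc32` stands in for Kafka's own key hashing, and brokers and replication are not modeled at all.

```python
import zlib


class Topic:
    """A topic is a set of append-only partition logs."""

    def __init__(self, name, num_partitions):
        self.name = name
        self.partitions = [[] for _ in range(num_partitions)]

    def produce(self, key, value):
        # The same key always maps to the same partition,
        # which preserves ordering per key.
        partition = zlib.crc32(key.encode("utf-8")) % len(self.partitions)
        self.partitions[partition].append((key, value))
        return partition, len(self.partitions[partition]) - 1  # (partition, offset)


class ConsumerGroup:
    """Each group tracks its own offsets, so groups read independently."""

    def __init__(self, topic):
        self.topic = topic
        self.offsets = [0] * len(topic.partitions)

    def poll(self):
        records = []
        for partition, log in enumerate(self.topic.partitions):
            records.extend(log[self.offsets[partition]:])
            self.offsets[partition] = len(log)
        return records

    def seek_to_beginning(self):
        # Messages stay in the log after being read, so a group can replay them.
        self.offsets = [0] * len(self.offsets)


topic = Topic("vm-events", num_partitions=3)
for i in range(6):
    topic.produce(f"vm-{i % 2}", f"event-{i}")

analytics = ConsumerGroup(topic)
alerts = ConsumerGroup(topic)

first_read = analytics.poll()      # everything produced so far
second_read = analytics.poll()     # nothing new since the last poll
alerts_read = alerts.poll()        # a second group sees the full stream too
analytics.seek_to_beginning()
replayed = analytics.poll()        # replay from offset 0

print(len(first_read), len(second_read), len(alerts_read), len(replayed))
```

Two properties carry over to the real system: consuming a message does not delete it, and each consumer group keeps its own position in each partition.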

For DBAs, think of JDBC or CDC connectors feeding Kafka for near-real-time replication, sidestepping laggy ETL jobs.

Kafka shines in durability (disk-backed logs for replays), pub-sub flexibility (one producer, many consumers), and seamless scaling.


It integrates with your stack—PostgreSQL CDC to Kafka, then to Redshift or Elasticsearch—boosting monitoring like PMM/Grafana with live metrics.

In healthcare, Kafka can stream patient vitals from wearables for instant alerts, aggregate EHR logs for fraud detection, or pipe CDC from hospital databases to analytics for outbreak tracking, all of which can be made HIPAA-compliant with encryption, access controls, and audit logging. For DB engineers, it's CDC gold: capture changes from MySQL or SQL Server in near real time and feed ML models without taking the source database offline or waiting for batch exports.

Inactive replication slots in Azure Database for PostgreSQL Flexible Server can silently fill your disk with WAL files. Here’s how to spot, drop, and prevent them.

Replication slots ensure WAL retention so consumers (CDC tools, read replicas) don’t miss changes. Inactive slots—created by stopped CDC jobs, deleted replicas, or failed experiments—pin old WAL indefinitely, consuming storage until it fills. Azure Flexible Server has safeguards like auto-grow, but slots can still cause outages.

Run these to identify storage hogs:

-- WAL retained by each slot (biggest first): distance from restart_lsn to the current WAL head

SELECT slot_name, plugin, slot_type, active,

pg_size_pretty(pg_wal_lsn_diff(pg_current_wal_lsn(), restart_lsn)) AS retained_wal

FROM pg_replication_slots

ORDER BY pg_wal_lsn_diff(pg_current_wal_lsn(), restart_lsn) DESC NULLS LAST;

-- Consumer lag for logical slots: changes written but not yet confirmed by the consumer

SELECT slot_name,

pg_size_pretty(pg_wal_lsn_diff(pg_current_wal_lsn(), confirmed_flush_lsn)) AS lag_size

FROM pg_replication_slots

WHERE slot_type = 'logical';

-- Inactive slots only

SELECT * FROM pg_replication_slots WHERE NOT active;

-- Check active physical replication (HA/replicas)

SELECT pid, state, sent_lsn, replay_lsn, write_lag

FROM pg_stat_replication;

Focus on inactive logical slots (slot_type='logical', active=false).

Drop slots one at a time, and never drop an active slot or an Azure-managed HA slot such as azure_standby:

SELECT pg_drop_replication_slot('your_inactive_slot_name');

Storage recovers once checkpoints remove or recycle the old WAL segments, which can take minutes to hours. Verify in Azure Metrics by watching the Storage used metric.
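
To keep this from recurring, it helps to flag inactive slots before they retain too much WAL. The sketch below shows the arithmetic behind `pg_wal_lsn_diff` and a simple filter on top of it. It assumes rows shaped like `pg_replication_slots` output have already been fetched; the sample rows, the 1 GiB threshold, and the `azure_` prefix used to protect managed slots are all assumptions to adapt.

```python
def lsn_to_int(lsn):
    """Convert an LSN such as '1/F0000000' to a byte position.

    An LSN is two hexadecimal numbers: the high and low 32 bits.
    """
    high, low = lsn.split("/")
    return (int(high, 16) << 32) + int(low, 16)


def retained_bytes(current_lsn, restart_lsn):
    # Same idea as pg_wal_lsn_diff(pg_current_wal_lsn(), restart_lsn).
    return lsn_to_int(current_lsn) - lsn_to_int(restart_lsn)


def drop_candidates(slots, current_lsn, threshold_bytes, protected_prefixes=("azure_",)):
    """Return (slot_name, retained_bytes) for inactive logical slots over the threshold."""
    candidates = []
    for slot in slots:
        if slot["active"] or slot["slot_type"] != "logical":
            continue
        if slot["slot_name"].startswith(protected_prefixes):
            continue  # never touch platform-managed slots (prefix is an assumption)
        if slot["restart_lsn"] is None:
            continue
        retained = retained_bytes(current_lsn, slot["restart_lsn"])
        if retained >= threshold_bytes:
            candidates.append((slot["slot_name"], retained))
    return sorted(candidates, key=lambda item: item[1], reverse=True)


# Hypothetical sample data for illustration only.
current = "2/00000000"
slots = [
    {"slot_name": "old_cdc_slot", "slot_type": "logical", "active": False, "restart_lsn": "1/00000000"},
    {"slot_name": "recent_slot", "slot_type": "logical", "active": False, "restart_lsn": "1/F0000000"},
    {"slot_name": "live_cdc_slot", "slot_type": "logical", "active": True, "restart_lsn": "1/00000000"},
    {"slot_name": "azure_standby", "slot_type": "physical", "active": False, "restart_lsn": "0/10000000"},
]

ONE_GIB = 1024 ** 3
flagged = drop_candidates(slots, current, threshold_bytes=ONE_GIB)
print(flagged)
```

A check like this only reports candidates. Dropping a slot is still a manual decision, because the consumer behind it may simply be paused rather than gone.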

When an employee leaves or a service account is retired, it’s essential to remove their access cleanly and consistently from SQL Server.

Manually revoking access across multiple databases can be error-prone and time-consuming — especially in large environments.

In this post, we’ll look at how to use the dbatools PowerShell module to automatically remove a user from all databases (except system ones) and drop the server-level login, with full logging for audit purposes.

Install-Module dbatools -Scope CurrentUser -Force

<#

.SYNOPSIS

Removes a SQL Server login and its users from all user databases.

Works for both domain and SQL logins, with logging.

#>

param(

[Parameter(Mandatory = $true)]

[string]$SqlInstance,

[Parameter(Mandatory = $true)]

[string]$Login,

[string]$LogFile = "$(Join-Path $PSScriptRoot ("UserRemovalLog_{0:yyyyMMdd_HHmmss}.txt" -f (Get-Date)))"

)

if (-not (Get-Module -ListAvailable -Name dbatools)) {

Write-Error "Please install dbatools using: Install-Module dbatools -Scope CurrentUser -Force"

exit 1

}

function Write-Log {

param([string]$Message, [string]$Color = "White")

$timestamp = (Get-Date).ToString("yyyy-MM-dd HH:mm:ss")

$logEntry = "[$timestamp] $Message"

Write-Host $logEntry -ForegroundColor $Color

Add-Content -Path $LogFile -Value $logEntry

}

Write-Log "=== Starting cleanup for login: $Login on instance: $SqlInstance ===" "Cyan"

$UserDatabases = Get-DbaDatabase -SqlInstance $SqlInstance | Where-Object { -not $_.IsSystemObject }

foreach ($db in $UserDatabases) {

try {

$dbName = $db.Name

$user = Get-DbaDbUser -SqlInstance $SqlInstance -Database $dbName -User $Login -ErrorAction SilentlyContinue

if ($user) {

Write-Log "Removing user [$Login] from [$dbName]" "Red"

# -EnableException makes dbatools throw on failure so the catch block below actually runs

Remove-DbaDbUser -SqlInstance $SqlInstance -Database $dbName -User $Login -Confirm:$false -EnableException

Write-Log "✅ Removed from [$dbName]" "Green"

}

else {

Write-Log "User [$Login] not found in [$dbName]" "DarkGray"

}

}

catch {

Write-Log "⚠️ Failed in [$dbName]: $_" "Yellow"

}

}

try {

$loginObj = Get-DbaLogin -SqlInstance $SqlInstance -Login $Login -ErrorAction SilentlyContinue

if ($loginObj) {

$loginType = $loginObj.LoginType

Write-Log "Removing server-level login [$Login] ($loginType)" "Red"

# -EnableException makes dbatools throw on failure so the catch block below actually runs

Remove-DbaLogin -SqlInstance $SqlInstance -Login $Login -Confirm:$false -EnableException

Write-Log "✅ Server-level login removed" "Green"

}

else {

Write-Log "No server-level login [$Login] found" "DarkGray"

}

}

catch {

Write-Log "⚠️ Failed to remove login [$Login]: $_" "Yellow"

}

Write-Log "=== Completed cleanup for [$Login] on [$SqlInstance] ===" "Cyan"

Write-Log "Log file saved to: $LogFile" "Gray"

Save the script as Remove-DbUserFromAllDatabases.ps1 and run it against your instance:

.\Remove-DbUserFromAllDatabases.ps1 -SqlInstance "SQLPROD01" -Login "Contoso\User123"

You can also specify a custom log path:

.\Remove-DbUserFromAllDatabases.ps1 -SqlInstance "SQLPROD01" -Login "appuser" -LogFile "C:\Logs\UserCleanup.txt"
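
For audit purposes it can be handy to summarize the log afterwards. The sketch below is one way to do that, assuming the `[timestamp] message` line format and the message wording that `Write-Log` produces in the script above. The sample lines are hypothetical, and the category names are my own.

```python
import re
from collections import Counter

# Matches "[2024-05-01 10:00:00] some message"
LOG_LINE = re.compile(r"^\[(?P<timestamp>[^\]]+)\] (?P<message>.*)$")


def classify(message):
    """Map a log message to a coarse outcome category.

    Matching is done on words rather than emoji, since the emoji may not
    survive every log file encoding.
    """
    if "Failed" in message:
        return "failed"
    if "Server-level login removed" in message:
        return "login_removed"
    if "No server-level login" in message:
        return "login_not_found"
    if "Removed from [" in message:
        return "user_removed"
    if "not found in [" in message:
        return "user_not_found"
    return "info"


def summarize(lines):
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if match:
            counts[classify(match.group("message"))] += 1
    return counts


# Hypothetical log content for illustration only.
sample_log = [
    "[2024-05-01 10:00:00] === Starting cleanup for login: appuser on instance: SQLPROD01 ===",
    "[2024-05-01 10:00:01] Removing user [appuser] from [Sales]",
    "[2024-05-01 10:00:02] Removed from [Sales]",
    "[2024-05-01 10:00:03] User [appuser] not found in [HR]",
    "[2024-05-01 10:00:04] Failed in [Finance]: The database principal owns a schema",
    "[2024-05-01 10:00:05] Removing server-level login [appuser] (SqlLogin)",
    "[2024-05-01 10:00:06] Server-level login removed",
]

summary = summarize(sample_log)
print(dict(summary))
```

In practice you would pass the lines of the file named by `-LogFile` instead of `sample_log`. Any non-zero `failed` count is the signal to review the log by hand.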