Let’s break this down in plain language, building from the basics to the complete picture.

What is Azure Private Link Service? (The Analogy)

Think of Azure Private Link Service like a private, secure doorway between two buildings:

- Building A (Snowflake’s building) is in a gated community that you don’t control

- Building B (Your Azure resources) is your own building

- You can’t build a bridge between the buildings (no VNet peering)

- You can’t use the public street (no internet for PHI data)

Azure Private Link Service creates a private tunnel through the ground (Azure’s backbone network) that only connects these two specific buildings, and nobody else can use it.

1. The Purpose of Azure Private Link Service

The Problem It Solves:

Imagine you have a service (like your MySQL database) running in your private Azure network. Normally, an external service would reach it in one of two ways:

- Public Internet: Open a door to the internet (❌ Not acceptable for PHI)

- VNet Peering: Directly connect networks (❌ Not possible with Snowflake’s multi-tenant setup)

The Solution:

Azure Private Link Service provides a third way:

- It lets an external service create a private endpoint in its own network that maps to your service

- Traffic flows over Microsoft’s private backbone network (never touches the internet)

- The external service just sees a private IP address in its own network

- Your service remains completely private within your VNet

Real-World Analogy:

It’s like having a private phone line installed between two companies:

- The caller (Snowflake) dials a special number (private endpoint)

- The call routes through a private telephone network (Azure backbone)

- It rings at your reception desk (Load Balancer)

- Your receptionist transfers it internally (to ProxySQL, then MySQL)

- The call never touches the public phone network, so outsiders have no line to tap

2. When is Azure Private Link Service Used?

Common Scenarios:

Scenario A: Third-Party SaaS Needs Private Access

- You use a SaaS product (like Snowflake, Databricks, or vendor tools)

- They need to access YOUR Azure resources

- You want zero public internet exposure

- ✅ Use Private Link Service

Scenario B: Customer Access to Your Services

- You provide a service to customers

- Customers are in their own Azure subscriptions

- You want to offer private connectivity

- ✅ Use Private Link Service

Scenario C: Cross-Region Private Access

- Your resources are in different Azure regions

- You want private connectivity without complex VNet peering chains

- ✅ Use Private Link Service

Your Specific Use Case:

You’re using it because:

- Snowflake is external (different Azure subscription, multi-tenant)

- MySQL is private (VNet-integrated, no public access)

- PHI compliance (must avoid public internet)

- No VNet peering option (Snowflake’s network architecture doesn’t support it)

3. How Azure Private Link Service Connects with External Services Securely

Let me walk through this step-by-step with your Snowflake scenario:

Step 1: You Create the Private Link Service

In your Azure subscription:

Your Actions:

├─ Create Standard Load Balancer

│ └─ Backend pool: ProxySQL VMs

├─ Create Private Link Service

│ ├─ Attach to Load Balancer's frontend IP

│ ├─ Generate a unique "alias" (like a secret address)

│ └─ Set visibility controls

What happens:

- Azure creates a special resource that “wraps” your Load Balancer

- It generates an alias, a unique and hard-to-guess address of the form:

mycompany-mysql-pls.<GUID>.<region>.azure.privatelinkservice

- This alias is how external services find your Private Link Service

Step 2: You Share the Alias with Snowflake

You give Snowflake two things:

- The alias (the secret address)

- Permission (you’ll approve their connection request)

Important: The alias itself doesn’t grant access. It’s just the address. Knowing someone’s phone number doesn’t mean they’ll accept your call.

Step 3: Snowflake Creates a Private Endpoint in Their Network

In Snowflake’s Azure environment (which you don’t control):

Snowflake's Actions:

├─ Create Private Endpoint in their VNet

│ └─ Target: Your Private Link Service alias

├─ This generates a connection request

└─ Connection shows as "Pending" (waiting for your approval)

What happens:

- Snowflake creates a network interface in THEIR VNet

- This interface gets a PRIVATE IP address (like 10.x.x.x) in their network

- Azure knows this interface wants to connect to YOUR Private Link Service

- But it can’t connect yet – you must approve it

Step 4: You Approve the Connection

In your Azure portal:

You see:

├─ Connection Request from Snowflake

│ ├─ Shows their subscription ID

│ ├─ Shows requested resource (your Private Link Service)

│ └─ Status: "Pending"

└─ You click: "Approve"

What happens:

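- Azure maps Snowflake’s private endpoint to your Private Link Service across its backbone network

- Traffic sent to that endpoint is delivered to your Load Balancer’s frontend

- The path is isolated from other tenants at the network fabric level; encryption of the data itself comes from TLS (see Layer 4 below)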
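The request-and-approval flow in Steps 3 and 4 can be sketched as a small state machine. This is a simplified model for illustration, not Azure's implementation: the state names mirror the connection statuses shown in the portal, while `ALLOWED_TRANSITIONS`, `transition`, and `traffic_allowed` are hypothetical names.

```python
from enum import Enum


class ConnectionState(Enum):
    PENDING = "Pending"
    APPROVED = "Approved"
    REJECTED = "Rejected"
    DISCONNECTED = "Disconnected"


# Moves the service owner can trigger in this simplified model.
ALLOWED_TRANSITIONS = {
    ConnectionState.PENDING: {ConnectionState.APPROVED, ConnectionState.REJECTED},
    ConnectionState.APPROVED: {ConnectionState.REJECTED, ConnectionState.DISCONNECTED},
    ConnectionState.REJECTED: set(),
    ConnectionState.DISCONNECTED: set(),
}


def transition(current: ConnectionState, target: ConnectionState) -> ConnectionState:
    """Return the new state, or raise if the move is not allowed in this model."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target


def traffic_allowed(state: ConnectionState) -> bool:
    """Only an approved connection carries traffic."""
    return state is ConnectionState.APPROVED
```

The point of the model is that a pending request carries no traffic, and the owner can move an approved connection back out of service at any time.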

Step 5: The Connection is Established

Now the complete path exists:

Snowflake OpenFlow

↓

Connects to: 10.x.x.x (private IP in Snowflake's VNet)

↓

[AZURE BACKBONE - PRIVATE, ISOLATED PATH]

↓

Arrives at: Your Load Balancer (in your VNet)

↓

Routes to: ProxySQL → MySQL

4. How the Secure Connection Actually Works (The Technical Magic)

Layer 1: Network Isolation

Network Fabric Level:

- Azure’s network uses software-defined networking (SDN)

- Your traffic is encapsulated in an overlay tunnel (VXLAN-style encapsulation inside Azure’s network)

- It’s tagged with your specific Private Link connection ID

- Other tenants’ traffic cannot see or intercept it

Analogy: It’s like sending a letter inside a locked box, which is then placed inside another locked box, with special routing instructions that only the Azure network fabric can read.
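The "box inside a box" idea can be shown with a toy model of encapsulation. This is only a sketch of the concept, not Azure's actual packet format: `InnerPacket`, `EncapsulatedPacket`, and the `link_id` field are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InnerPacket:
    src_ip: str
    dst_ip: str
    payload: bytes


@dataclass(frozen=True)
class EncapsulatedPacket:
    link_id: int        # hypothetical per-connection identifier
    inner: InnerPacket  # the original packet, carried unchanged


def encapsulate(packet: InnerPacket, link_id: int) -> EncapsulatedPacket:
    """Wrap the original packet in an outer header tagged with the connection."""
    return EncapsulatedPacket(link_id=link_id, inner=packet)


def decapsulate(frame: EncapsulatedPacket, expected_link_id: int) -> Optional[InnerPacket]:
    """Unwrap only frames that belong to the expected connection."""
    if frame.link_id != expected_link_id:
        return None  # traffic tagged for another connection is not delivered
    return frame.inner
```

In this model a frame tagged for one connection is simply never unwrapped by another, which is the isolation property the analogy describes.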

Layer 2: No Public IP Addresses

Key Point: Neither end has a public IP address

Snowflake side:

├─ Private Endpoint: 10.0.5.20 (private IP)

└─ NOT exposed to internet

Your side:

├─ Load Balancer: 10.1.2.10 (private frontend IP)

└─ NOT exposed to internet

Why this matters:

- No public IP address exists that could be attacked from the internet

- Internet port scanners can’t find it

- Internet-based DDoS attacks have no public address to target
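A quick way to sanity-check the "no public IP" claim is to confirm that every address in the path is non-routable on the internet. The sketch below uses Python's standard `ipaddress` module with the example addresses from this section; the `endpoints` dictionary and function name are illustrative.

```python
import ipaddress


def is_internet_routable(address: str) -> bool:
    """True if the address is globally routable (reachable from the internet)."""
    return ipaddress.ip_address(address).is_global


# Example addresses from this section.
endpoints = {
    "snowflake_private_endpoint": "10.0.5.20",
    "load_balancer_frontend": "10.1.2.10",
}

# Collect any endpoint that would be reachable from the internet.
exposed = [name for name, ip in endpoints.items() if is_internet_routable(ip)]
```

An empty `exposed` list means neither end of the connection has an address an internet scanner could reach.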

Layer 3: Azure Backbone Routing

The path traffic takes:

- Snowflake’s VM sends packets to its private endpoint (10.0.5.20)

- Azure SDN intercepts these packets

- Encapsulation happens: packet gets wrapped in Azure’s internal routing protocol

- Backbone transit: Travels through Microsoft’s private fiber network

- Decapsulation: Arrives at your Private Link Service

- Delivery: Forwarded to your Load Balancer

The security here:

- Traffic never routes through public internet routers

- Doesn’t traverse untrusted networks

- Uses Microsoft’s dedicated fiber connections

- Application data is encrypted with TLS (see Layer 4), independent of the network path

Layer 4: Encryption in Transit

Even though it’s on a private network, data is still encrypted:

Application Layer (MySQL):

└─ TLS/SSL encryption (MySQL protocol with SSL)

└─ Your ProxySQL and MySQL enforce encrypted connections

Network Layer:

└─ Private Link provides network-level isolation

└─ Even if someone accessed the physical network, the TLS-protected data stays encrypted

5. How We Ensure Azure Private Link Service is Secure

Let me give you a comprehensive security checklist:

A. Access Control (Who Can Connect?)

1. Approval Requirement

Setting: Auto-approval vs Manual approval

Your choice: MANUAL APPROVAL ✓

Why:

- You explicitly approve each connection request

- See who's requesting (subscription ID, service name)

- Can reject suspicious requests

- Can revoke access anytime

Action Item: Always use manual approval for production PHI data.

2. Visibility Settings

Options:

├─ Role-based access control only: Only principals you grant RBAC permissions can see your service

├─ Restricted by subscription: You list exact subscription IDs

└─ Anyone with your alias: Anyone who knows the alias can request (you still approve)

Your choice: Restricted by subscription, listing Snowflake's subscription ID ✓

Why:

- Limits who can even discover your Private Link Service

- Reduces surface area for connection attempts

Action Item: Configure visibility to allow ONLY Snowflake’s Azure subscription ID.
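How visibility and approval settings combine can be sketched as a small decision function. This is a simplified model under stated assumptions, not Azure's logic: the function name, the return strings, and the subscription IDs are hypothetical.

```python
from typing import Set


def evaluate_request(requester_sub: str,
                     visible_subs: Set[str],
                     auto_approved_subs: Set[str]) -> str:
    """Decide what happens to a connection request in this simplified model."""
    if requester_sub not in visible_subs:
        return "not visible"      # requester cannot target the service at all
    if requester_sub in auto_approved_subs:
        return "approved"         # skips the manual review step
    return "pending"              # waits for the owner's manual approval


# Hypothetical subscription IDs for illustration.
SNOWFLAKE_SUB = "11111111-1111-1111-1111-111111111111"
UNKNOWN_SUB = "99999999-9999-9999-9999-999999999999"

visible = {SNOWFLAKE_SUB}
auto_approved: Set[str] = set()   # empty: every request needs manual approval
```

With an empty auto-approval set, even the one allowed subscription lands in "pending", which matches the manual-approval recommendation above.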

3. Connection State Monitoring

Monitor:

├─ Active connections (who's connected right now)

├─ Pending requests (who's trying to connect)

├─ Rejected connections (what you've denied)

└─ Connection history (audit trail)

Action Item: Set up Azure Monitor alerts for:

- New connection requests

- Approved connections

- Connection removals

B. Network Security (Traffic Control)

1. Network Security Groups (NSGs)

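On the ProxySQL (Load Balancer backend) subnet:

Inbound Rules:

├─ ALLOW: From the Private Link Service NAT subnet (traffic arrives with a NAT IP as its source)

│ └─ Port: 3306 (MySQL)

├─ ALLOW: From the AzureLoadBalancer service tag (health probes)

├─ DENY: Everything else

└─ Priority: Specific to general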

Why:

- Even though traffic comes via Private Link, add NSG layer

- Defense in depth principle

- Logs any unexpected traffic patterns
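The "specific to general" ordering matters because NSG rules are evaluated by priority, lowest number first, and the first match wins. Here is a minimal sketch of that evaluation, assuming a hypothetical trusted source range of `10.1.3.0/24`; the `Rule` class and `evaluate` function are illustrative, not an Azure API.

```python
import ipaddress
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Rule:
    priority: int            # lower number is evaluated first
    source_cidr: str
    port: Optional[int]      # None matches any port
    action: str              # "Allow" or "Deny"


def evaluate(rules: List[Rule], source_ip: str, port: int) -> str:
    """Return the action of the first matching rule, in priority order."""
    address = ipaddress.ip_address(source_ip)
    for rule in sorted(rules, key=lambda r: r.priority):
        in_range = address in ipaddress.ip_network(rule.source_cidr)
        port_ok = rule.port is None or rule.port == port
        if in_range and port_ok:
            return rule.action
    return "Deny"  # nothing matched: this sketch falls back to deny


RULES = [
    Rule(100, "10.1.3.0/24", 3306, "Allow"),   # hypothetical trusted source range
    Rule(4096, "0.0.0.0/0", None, "Deny"),     # deny everything else
]
```

If the broad deny rule had the lower priority number, it would match first and the MySQL allow rule would never be reached.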

2. Load Balancer Restrictions

Load Balancer Configuration:

├─ Frontend: INTERNAL only (not public)

├─ Backend pool: Only specific ProxySQL VMs

├─ Health probes: Ensure only healthy backends receive traffic

└─ Session persistence: Configure based on OpenFlow needs

Action Item: Use Internal Load Balancer (not public-facing).
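The health-probe behaviour can be illustrated with a simple TCP check: a backend only stays in rotation if something is accepting connections on its port. This is a sketch of the idea rather than how Azure Load Balancer is implemented, and the function names and backend addresses are hypothetical.

```python
import socket
from typing import Callable, List, Tuple

Backend = Tuple[str, int]


def tcp_probe(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def healthy_backends(backends: List[Backend],
                     probe: Callable[[str, int], bool] = tcp_probe) -> List[Backend]:
    """Keep only the backends whose probe succeeds."""
    return [(host, port) for host, port in backends if probe(host, port)]
```

Passing the probe in as a parameter keeps the filtering logic testable without real ProxySQL VMs.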

3. ProxySQL VM Security

ProxySQL VM:

├─ No public IP address ✓

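├─ NSG allows ONLY the Private Link Service NAT subnet and Load Balancer health probes ✓

├─ OS firewall (firewalld/iptables) allows only the ProxySQL listener port (3306 here) ✓

├─ Managed identity for Azure resource access, such as reading secrets from Key Vault (no stored credentials) ✓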

└─ Regular security patching ✓

C. Data Security (Protecting the Payload)

1. Force TLS/SSL Encryption

On MySQL Flexible Server:

-- Require SSL connections

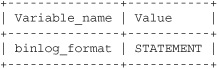

REQUIRE SSL;

-- Verify setting

SHOW VARIABLES LIKE 'require_secure_transport';

-- Should return: ON

On ProxySQL:

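-- Configure SSL for backend connections

UPDATE mysql_servers

SET use_ssl=1

WHERE hostgroup_id=0;

-- Force SSL for users

UPDATE mysql_users

SET use_ssl=1;

-- Apply the changes and persist them (ProxySQL admin changes are not live until loaded to runtime)

LOAD MYSQL SERVERS TO RUNTIME;

SAVE MYSQL SERVERS TO DISK;

LOAD MYSQL USERS TO RUNTIME;

SAVE MYSQL USERS TO DISK;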

Action Item: Never allow unencrypted MySQL connections, even over Private Link.

2. Certificate Validation

MySQL SSL Configuration:

├─ Use Azure-managed certificates

├─ Enable certificate validation

├─ Rotate certificates regularly

└─ Monitor certificate expiration

ProxySQL Configuration:

├─ Validate MySQL server certificates

├─ Use proper CA bundle

└─ Log SSL handshake failures
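On the client side, "enable certificate validation" comes down to a TLS configuration that refuses unverified servers. The sketch below builds such a configuration with Python's standard `ssl` module. The function name and the optional CA bundle path are assumptions, and how a particular MySQL client library consumes these settings depends on that library.

```python
import ssl
from typing import Optional


def build_strict_tls_context(ca_bundle_path: Optional[str] = None) -> ssl.SSLContext:
    """Create a client-side TLS context that verifies the server certificate."""
    # With no path given, the system's default trusted CAs are loaded.
    context = ssl.create_default_context(cafile=ca_bundle_path)
    # Refuse protocol versions older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    # create_default_context already sets these; stated here to make the intent explicit.
    context.check_hostname = True
    context.verify_mode = ssl.CERT_REQUIRED
    return context
```

A handshake against a server with an untrusted or mismatched certificate fails under this context, which is the kind of failure the list above says to log.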

D. Authentication & Authorization

1. MySQL User Permissions

Create a dedicated user for Snowflake OpenFlow:

-- Create dedicated user

CREATE USER 'snowflake_cdc'@'%'

IDENTIFIED WITH mysql_native_password BY 'strong_password'

REQUIRE SSL;

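-- Grant ONLY necessary permissions

-- Replication privileges are global, so they must be granted ON *.*

GRANT REPLICATION SLAVE, REPLICATION CLIENT

ON *.*

TO 'snowflake_cdc'@'%';

-- Read access to the replicated database

GRANT SELECT

ON your_database.*

TO 'snowflake_cdc'@'%';

-- Or, instead of database-wide SELECT, restrict to specific tables if possible

GRANT SELECT

ON your_database.specific_table

TO 'snowflake_cdc'@'%';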

Key Principle: Least privilege – only grant what OpenFlow needs for CDC.
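A least-privilege review can be automated by comparing what a user actually holds against the short list CDC needs. This is a minimal sketch. The allowed set comes from the grants in this section, and the function name and sample inputs are hypothetical.

```python
from typing import Iterable, Set

# The privileges this section grants to the CDC user.
ALLOWED_CDC_PRIVILEGES = {"SELECT", "REPLICATION SLAVE", "REPLICATION CLIENT"}


def excess_privileges(granted: Iterable[str]) -> Set[str]:
    """Return any granted privileges that go beyond what CDC needs."""
    normalized = {privilege.strip().upper() for privilege in granted}
    return normalized - ALLOWED_CDC_PRIVILEGES
```

An empty result means the user is within bounds. Anything returned, such as `INSERT` or `DROP`, is a candidate for revocation.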

2. ProxySQL Authentication Layer

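-- ProxySQL adds its own authentication layer in front of MySQL

-- It checks these credentials, then uses the same username and password to log in to the backend

INSERT INTO mysql_users (

username,

password,

default_hostgroup,

max_connections

) VALUES (

'snowflake_cdc',

'strong_password',

0, -- backend hostgroup

100 -- connection limit

);

-- Apply and persist the change

LOAD MYSQL USERS TO RUNTIME;

SAVE MYSQL USERS TO DISK;

Benefit: ProxySQL can enforce per-user connection limits and routing without any change on the Snowflake side.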

E. Monitoring & Auditing (Detect Issues)

1. Azure Monitor Logs

Enable diagnostics on:

├─ Private Link Service

│ └─ Log: Connection requests, approvals, data transfer

├─ Load Balancer

│ └─ Metrics: Connection count, bytes transferred, health status

├─ Network Security Groups

│ └─ Flow logs: All traffic patterns

└─ ProxySQL VM

└─ Azure Monitor Agent: OS and application logs

2. MySQL Audit Logging

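-- Enable audit logging

-- On Flexible Server these are server parameters, set in the portal or with the Azure CLI rather than SET GLOBAL

audit_log_enabled = ON

audit_log_events = CONNECTION (plus the query event types you need)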

-- Monitor for:

├─ Failed authentication attempts

├─ Unusual query patterns

├─ Large data exports

└─ Access outside business hours

3. Alert on Anomalies

Set up alerts for:

├─ New Private Link connection requests

├─ High data transfer volumes (possible data exfiltration)

├─ Failed authentication attempts

├─ ProxySQL health check failures

├─ Load Balancer backend unhealthy

└─ NSG rule matches (blocked traffic attempts)
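For the "high data transfer volumes" alert, one simple approach is to compare the latest reading against a recent baseline. The sketch below flags a value that sits more than a chosen number of standard deviations above the mean. The threshold, function name, and sample figures are illustrative assumptions, and a production alert would more likely be an Azure Monitor rule.

```python
import statistics
from typing import Sequence


def is_anomalous(history_gb: Sequence[float],
                 latest_gb: float,
                 threshold_sigmas: float = 3.0) -> bool:
    """Flag a transfer volume far above the historical baseline."""
    mean = statistics.mean(history_gb)
    stdev = statistics.stdev(history_gb)   # needs at least two data points
    if stdev == 0:
        return latest_gb > mean            # flat baseline: any increase stands out
    return (latest_gb - mean) / stdev > threshold_sigmas


# Hypothetical daily transfer volumes in GB.
baseline = [10, 12, 11, 9, 10, 11, 12]
```

Only unusually high values are flagged here, since the concern in the list above is possible exfiltration rather than a quiet day.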

F. Compliance & Governance (PHI Requirements)

1. Data Residency

Ensure:

├─ Private Link Service: Same region as MySQL

├─ Load Balancer: Same region

├─ ProxySQL VMs: Same region

└─ Snowflake region: Appropriate for your PHI requirements

Why: Minimize data travel, maintain compliance boundaries

2. Encryption at Rest

MySQL Flexible Server:

├─ Enable: Customer-managed keys (CMK) with Azure Key Vault

├─ Rotate: Keys regularly

└─ Audit: Key access logs

Why: PHI must be encrypted at rest

3. Access Logs & Retention

Retention policy:

├─ Connection logs: 90 days minimum

├─ Query audit logs: Per HIPAA requirements

├─ NSG flow logs: 30 days minimum

└─ Azure Activity logs: 90 days minimum

Why: Compliance audits, incident investigation
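The numeric minimums in the retention policy can be checked against whatever is actually configured. This is a small sketch. The dictionary keys and function name are hypothetical, and the query audit log is left out because its retention is set by your HIPAA policy rather than a fixed number here.

```python
from typing import Dict, Tuple

# Minimum retention in days, taken from the policy above.
MINIMUM_RETENTION_DAYS = {
    "connection_logs": 90,
    "nsg_flow_logs": 30,
    "activity_logs": 90,
}


def retention_gaps(configured: Dict[str, int]) -> Dict[str, Tuple[int, int]]:
    """Return {log_type: (configured_days, required_days)} for every shortfall."""
    gaps = {}
    for log_type, minimum in MINIMUM_RETENTION_DAYS.items():
        actual = configured.get(log_type, 0)   # a missing entry counts as zero days
        if actual < minimum:
            gaps[log_type] = (actual, minimum)
    return gaps
```

Treating a missing entry as zero days means an unconfigured log type shows up as a gap instead of being silently skipped.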

6. Security Validation Checklist

Before going live, verify:

Pre-Production Checklist:

☐ Private Link Service visibility: Restricted to Snowflake subscription

☐ Manual approval: Enabled (no auto-approval)

☐ NSG rules: Properly configured on all subnets

☐ Load Balancer: Internal only (no public IP)

☐ ProxySQL VMs: No public IPs, managed identities configured

☐ MySQL SSL: Required for all connections

☐ Certificate validation: Enabled

☐ Least privilege: MySQL user has minimal permissions

☐ Audit logging: Enabled on MySQL and ProxySQL

☐ Azure Monitor: Diagnostic logs enabled on all components

☐ Alerts: Configured for security events

☐ Network flow logs: Enabled for NSGs

☐ Encryption at rest: Customer-managed keys configured

☐ Backup encryption: Verified

☐ Disaster recovery: Tested with Private Link maintained

☐ Documentation: Architecture diagram, runbooks created

☐ Incident response: Plan includes Private Link scenarios

Ongoing Security Practices:

☐ Weekly: Review connection logs

☐ Weekly: Check for pending connection requests

☐ Monthly: Audit MySQL user permissions

☐ Monthly: Review NSG rules for drift

☐ Quarterly: Certificate expiration check

☐ Quarterly: Penetration testing (authorized)

☐ Annually: Full security audit

☐ Continuous: Monitor alerts in real-time
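The quarterly certificate check is easy to script. The sketch below turns a certificate's `notAfter` field, in the format Python's `ssl` module reports it, into days remaining. The function names, the 30-day renewal window, and the sample date are assumptions for illustration.

```python
import ssl
from datetime import datetime, timezone


def days_until_expiry(not_after: str, now: datetime) -> int:
    """Whole days between `now` and the certificate's notAfter timestamp."""
    expires_epoch = ssl.cert_time_to_seconds(not_after)
    expires = datetime.fromtimestamp(expires_epoch, tz=timezone.utc)
    return (expires - now).days


def needs_renewal(not_after: str, now: datetime, window_days: int = 30) -> bool:
    """True if the certificate expires within the renewal window."""
    return days_until_expiry(not_after, now) <= window_days
```

Passing `now` in explicitly (as a timezone-aware UTC datetime) keeps the check deterministic and easy to test.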

Common Security Concerns & Answers

Q: Can someone intercept traffic on the Azure backbone?

A: It is designed to prevent this, for multiple reasons:

- Network isolation: Your Private Link traffic is isolated from other tenants by Azure’s overlay encapsulation

- Logical separation: Azure’s SDN ensures tenant isolation at the hypervisor level

- Encryption: Even if someone reached the physical layer (highly unlikely), MySQL traffic is TLS-encrypted

- Microsoft’s security: The Azure backbone is not reachable by customers or from the internet

Q: How do I know Snowflake is really who they say they are?

A: Multiple verification layers:

- Subscription ID: You see Snowflake’s Azure subscription ID in the connection request

- Manual approval: You explicitly approve the connection

- Authentication: Even after the connection, Snowflake must authenticate to MySQL

- Certificate validation: TLS certificates verify server identity

- Coordination: You coordinate with Snowflake support to verify the timing of the connection

Q: What if someone compromises Snowflake’s environment?

A: Defense in depth protects you:

- Authentication required: Attacker still needs MySQL credentials

- Least privilege: CDC user can only SELECT and read binlog, cannot modify data

- Audit logging: Any unusual activity is logged

- Revocable access: You can instantly reject/delete the Private Link connection

- Network monitoring: Unusual data transfer patterns trigger alerts

Q: Is Private Link more secure than VPN or ExpressRoute?

A: For exposing a single service like this, it has a smaller attack surface, for several reasons:

- No customer routing: You don’t manage routes or BGP

- No gateway management: No VPN gateways to patch/secure

- Narrow scope: VPN and ExpressRoute connect whole networks, while Private Link exposes only one service endpoint

- Simpler security model: Less complexity = fewer places to misconfigure

- Azure-managed: Microsoft handles underlying infrastructure security

Summary: The Security Model

Azure Private Link Service creates a multi-layered secure connection:

Security Layers (from outer to inner):

│

├─ Layer 1: Access Control

│ └─ Manual approval, subscription restrictions

│

├─ Layer 2: Network Isolation

│ └─ Private IPs, no internet exposure, Azure backbone

│

├─ Layer 3: Traffic Control

│ └─ NSGs, Load Balancer rules, firewall policies

│

├─ Layer 4: Encryption in Transit

│ └─ TLS/SSL for MySQL protocol

│

├─ Layer 5: Authentication

│ └─ MySQL user credentials, certificate validation

│

├─ Layer 6: Authorization

│ └─ Least privilege MySQL permissions

│

├─ Layer 7: Monitoring & Auditing

│ └─ Logs, alerts, anomaly detection

│

└─ Layer 8: Encryption at Rest

└─ Customer-managed keys for MySQL data

Each layer provides protection, so even if one layer is compromised, others maintain security.